We are asked to evaluate our own radio show and one other. This feels like an important element of learning on DS106, but feedback is harder to offer and receive in this Headless version of the course than it might be if we were doing the course for credit. Alan Levine reflects on the difficulty of giving feedback at the right level in a hashtag classroom. He concludes his post on this with some interesting questions:

Can a community fill some of that feedback role so an instructor does not max out? Or in what ways can the class itself pick up its own feedback circle without it being a thing being done just for the credits?

I think this is an area that merits a thoughtful response, and the best way to give one is to jump in and offer some feedback, as the week 9 assignment asks me to do. I will post later on what I believe to be a methodological approach that may offer an answer. Briefly, I believe that Self Managed Learning offers a potential model. I have been teaching face-to-face using this model for 20 years and believe it would translate well to the hashtag classroom with few adaptations.

A core issue on this Headless 13 course is that, as it is not done for credit, it is permissible to do as much or as little as anyone wants, and there are no consequences for not completing assignments. Furthermore, what is delivered meets self-set criteria rather than criteria set externally or collaboratively by the cohort. How we learn, how we reach understanding and how we meet quality criteria for learning are complex, manifold issues, involving a four-point diagram of content, teacher, learner and the context where learning happens. They further depend on personal beliefs about how these elements interact. Is learning social, personal or both? Do we subscribe to the conduit metaphor of knowledge or to a metaphor of learning as participation? These questions will be the topic of another post.

cc licensed (BY NC SA) flickr photo shared by Dave Sag

Here I jump the donkeys carrying computers and evaluate ‘Shrinking the big questions’ and ‘Spinning around’ by answering the questions set in our weekly announcement. I trust that my capacity for critical thinking and feedback, built over a long career, will suffice to do this task justice. There is little in the DS106 material, beyond informal conversations and suggestions, that supports open participants in learning how to do this. Gardner Campbell suggested at Open VA recently that we could do worse than follow Wikipedia’s behavioural guidelines when working in the hashtag classroom. Notwithstanding the decline in participation in the Wikipedia project, maybe this is a good starting set of rules for online evaluation. [At least until I write the definitive post on online feedback and why it will never work.]

My observations so far are that peer celebration is more the order of the day in DS106 than clear and specific feedback. The best feedback of this kind I have received has come from Alan Levine, who, though not an instructor of Headless 13, is certainly an ‘elder’ active in our little community.

Let me be clear, I have nothing against peer celebration. I do believe we need ego boosting as well as notes for change and improvement.

The issue for me is that, as our reciprocal relational links are light, I am unsure I know anyone well enough to offer improvement feedback in a way that can be heard and may be wanted. I am also unclear about the standards for assessing and evaluating both my own work and that of others.

I am unsure whether what I can offer will be welcomed.

My initial observations of the norms operating in our community suggest to me that whatever we do is a ‘pass’ and that better/worse are not comparators it uses explicitly. For myself, Alan telling me ‘avoid x and try y instead’ helped me improve the quality of my output, and that is why I engage with others to learn. I welcome more of that from others. Whilst I have had great help when asking about a ‘how-to’, I have had less feedback evaluating my work against a set of standards than I might in a for-credit classroom.

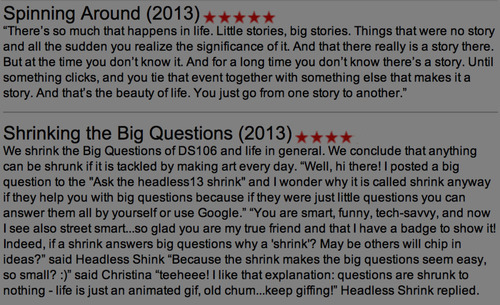

We do implicitly evaluate work in DS106. This week we were asked to nominate somebody’s work to the Inspire website. I had already done so before reading the request – I nominated ‘Spinning Around’. The frame used is ‘nominate work you found inspirational’. This does give a clue as to evaluation norms in the community. Work is not better or worse, but sometimes people make art that inspires us. I can do that but that does not teach me (or does it?) how to make my own work better.

I make stuff that sucks, and I am happy to have a go. However, my trying does not make it good art; it just makes me good at trying. External evaluation matters if I am to improve and learn beyond my own limitations.

Our radio team gelled behind the shared aim to produce a great show:

@TalkyTeam our show was: ‘funny, absurd, and very DS106!’ praise indeed says @Jess_TheObscure a post with audio. Loved it #radiogaga

— Mariana Funes (@mdvfunes)

and I feel we succeeded.

How did we evaluate each other’s work? We made sure we spent time relating and laughing together: good old-fashioned ‘let’s get to know each other a bit’ before focusing on the task at hand. We agreed working norms that would enable us to produce the show, and stuck to them.

We had plenty of opportunity to offer each other feedback. What I noticed was that it was never personal; it was always measured against the quality of the output. For example, I spent a few hours trying to clean up Jess’s audio. I did what I could, but was not happy with the result. Karen also offered specific feedback along the lines of ‘the echo is just too much, should we ask Jess to re-record?’. By then, Jess had already heard the edited audio and was on the case to re-record and learn Audacity. Another example was the show’s introduction. I had recorded an introduction early on and was pretty chuffed with it. Of course, the introduction had been done in isolation from the show as it evolved. I woke up one morning to find lots of tweets that essentially said ‘introduction is too long, need to re-record or drop from show’. After picking my hurt ego up off the floor (not really), I tweeted that I thought we should not drop it, as the show needed an introduction, and that I would re-record it. I was ruthless in editing the script, recorded it again, and it was all the better for it.

It has also been easy to keep working together and offering feedback on each other’s output. We are now even evaluating our own work as we send it out: I can hear when the sound is not right and offer my evaluation, and then we either use the take if it is usable and we are short of time, or redo it if we can. We streamlined our evaluation process, and it has enabled us to produce another episode of our show for Halloween, just because we wanted to.

Below I take a stab at answering the evaluation questions exactly as the week 9 announcement requests. I am doing this to encourage us all to do more realistic and specific evaluation, as well as to keep on with peer celebration simply because we are motivated to create something each day. This is awesome.

What would make it even more awesome, for me at least, is for us to engage more in evaluating the quality of what we are producing and to engage critically with the criteria for evaluation. What can I do

- less of

- more of

- or continue doing

to make my DS106 work better each day?

I believe the weekly announcement suggests we should be engaging in this conversation, beyond the undeniable fact that our motivation to keep making stuff is inspirational and exercises the creative muscle.

Criteria for audio and the extent to which Shrinking the big Questions (SBQ) and Spinning Around (SA) met them

Quality of audio sound – e.g. Is the volume appropriate? Are the levels even? Is the sound clear, and free of noises not needed (e.g. mouse clicks, background noise)?

To my untrained ear, SA had the best sound quality of all the shows. I listened to it over and over and enjoyed its crisp sound each time. There was a flow to the sound; the silences were just long enough, neither too long nor too short. The whole thing cohered to give the feel of one song with many voices, each voice clearly heard.

SBQ struggled to get the sound levels even. When I heard the whole thing at the European Premiere, I noticed extraneous noises in the different segments; at one point you can hear somebody tapping at a keyboard. We each mixed our own segments, whereas SA had one person mixing from the raw sound. What would make it better? I learnt that great care has to be taken over the quality of the raw recording, as there is only so much you can do when you edit it. This was very clear with the DS106 controversy segment: the second recording, made with a higher-quality microphone, was far better and easier to edit than the first.

Quality of audio editing – use of effects, transitions, are the edits clean?

I need to learn more about how to fade in and out. Looking at how others edited, I learnt that I cut in and out too sharply and abruptly. I do not understand enough about the physics of sound to manipulate it in any meaningful way beyond trial and error. I also need to learn more about the mechanics of microphones and fine editing.

We were saved in SBQ by our overall editor’s self-confessed perfectionist streak. Credit goes to her for working through many a tutorial to learn ways to improve our sound. Talky Tina and Christina clearly know more about sound, and though I have not asked Karen, I think their sections will have given our sound editor much less hassle than, say, mine.

I am now learning to use effects, music for transitions and segment smoothing more easily. SA uses effects and transitions in such a way that there is no sense of separate sections. It all blends easily to my ear, and with so many voices involved, I am inspired by both the editing and the quality of the raw sound the group produced.

Use of sound effects- How are they used? Is it effective?

Yes, I think both shows used sound effects effectively. I did not use them in my section, and their absence shows. I ran out of time to add them and had not yet learnt how to do it well, so decided to go without. SA used staggered repetition to great effect: having the song’s lyrics spoken just after the song is played is very powerful. Of note in SBQ are the sound effects in the cooking section and in the meaning of life section; they are clear, appropriate and timely.

Use of music- how is it used? Is it effective or distracting?

The music added in SBQ worked well. I did not use music, for the same reason as above: not enough knowledge of how to mix. The music added by Karen and Talky Tina really helped bring the show together and taught me how important it is to include music to keep listeners’ attention. I need to work on this, and SA were an example of best practice in the use of music.

Does the show have a structure? Is it cohesive or does it feel stitched together?

SBQ could have felt stitched together, and maybe it does a little. Talky Tina’s interventions throughout create a thread, as do the bumpers and commercials (I did not see the point of them at the start of the audio weeks), and references to other segments within the show help the flow.

However, our topics and styles were very different and had the team work not been as good as it was, the knitting together may have failed.

Interestingly, SA’s editor Rochelle also felt that the group members’ approaches were different and that something was needed to make the show cohere. Perhaps this is the craft of editing? SA tackled the coherence issue with music that flowed through the whole show. I loved the one song SA used, but the whole thing would have been lost on somebody who did not like the song, as it was repeated over and over again. It was a high-risk strategy for coherence that paid off, to my untrained ear at least. I would have liked more narrative about the ideas and the making of the show. Some of what came through in the blog post could have made another 15 minutes of the show, and may have given us a coherent analytical message after the emotional and non-linear message had been heard.

Does it tell a story effectively? Is there a sense of drama, unknown? Does it draw you in to listen?

SA absolutely does, and I cannot think of anything that would enhance it. SBQ was not about drama but about humour and thought-provoking ideas, and it did draw me in. I chose to listen to it for fun, and each time I hear it I smile. Yes, it tells a story effectively, but it is too long.

If I were thinking of it as a ‘proper’ radio show, I would structure it as 10-minute episodes, each tackling only one question. Music and other programming would be the main focus, and SBQ would be short ‘funny, absurd, and very DS106!’ morsels spread through the day.

If you were to rate this radio show, how many stars out of five would you give it?

I answer this question with a little help from Photoshop and Hackasaurus.

I worry about upsetting my team by giving us one star less. I worry about the other teams thinking I chose this show because I liked it, and that maybe I did not like theirs as much. I worry about offending both teams I talk about here with some of my suggestions for improvement. It is hard to offer meaningful evaluation to others online; I find it easier to self-evaluate. But unless I hear external voices, I worry I am in a digital echo chamber, not learning new things, just hearing what I want to hear.

What has helped the process is the closeness of our small-group work and getting to know people. If we understand people’s motivation, it is easier to offer feedback that can be heard. Just because I want improvement feedback, and because DS106 announcements suggest we should learn how to evaluate ourselves and others effectively, it does not mean that everyone involved in DS106 Headless 13 wants evaluation.

Some may just want a space to make art, not a space to be evaluated.

I need to tackle the issue of assessing others’ work.

- How can criteria for assessment be agreed online?

- How can we teach online participants to assess each other with rigour that comes out of engaging with the issue of setting clear standards?

Why? Selfishly, if I want to use this kind of model to support my own students, then we need to be able to assess each other’s work to Masters-level standards and do so in a public setting. Self-managed learning offers a set of procedures that may help, but the group process and norm-setting issues remain.

This feels like an insurmountable challenge to introducing open education in my environment right now. I hope to learn more about effective ways to evaluate my own work and that of others as we move into the final (final?) stretch of this DS106 Headless.
